
Apr 28, 2026

Most AI hiring tools speed up workflows but don’t improve decisions. This article explores why hiring is fundamentally a decision problem, and how KYNAA brings structure, consistency, and intelligence to it.

Somewhere in your organization, an engineer is choosing a cloud provider for an AI workload. A procurement team is signing a compute agreement. A finance team is approving a GPU budget using a framework built for software licensing.

None of these decisions will appear on a board agenda. Yet all of them will determine whether your AI strategy is profitable three years from now.

This, not model selection, data strategy, or talent, is the governance gap that will separate AI leaders from AI casualties in the next phase.

When executives discuss AI today, the conversation typically revolves around capability reviews, use case inventories, governance frameworks, and risk controls. The focus is on what the models can do, what they cannot do, where they are being deployed, and how they are being governed.

What the conversation almost never includes is the economics of running these systems continuously at scale as workloads grow more complex.

That omission is about to become expensive.

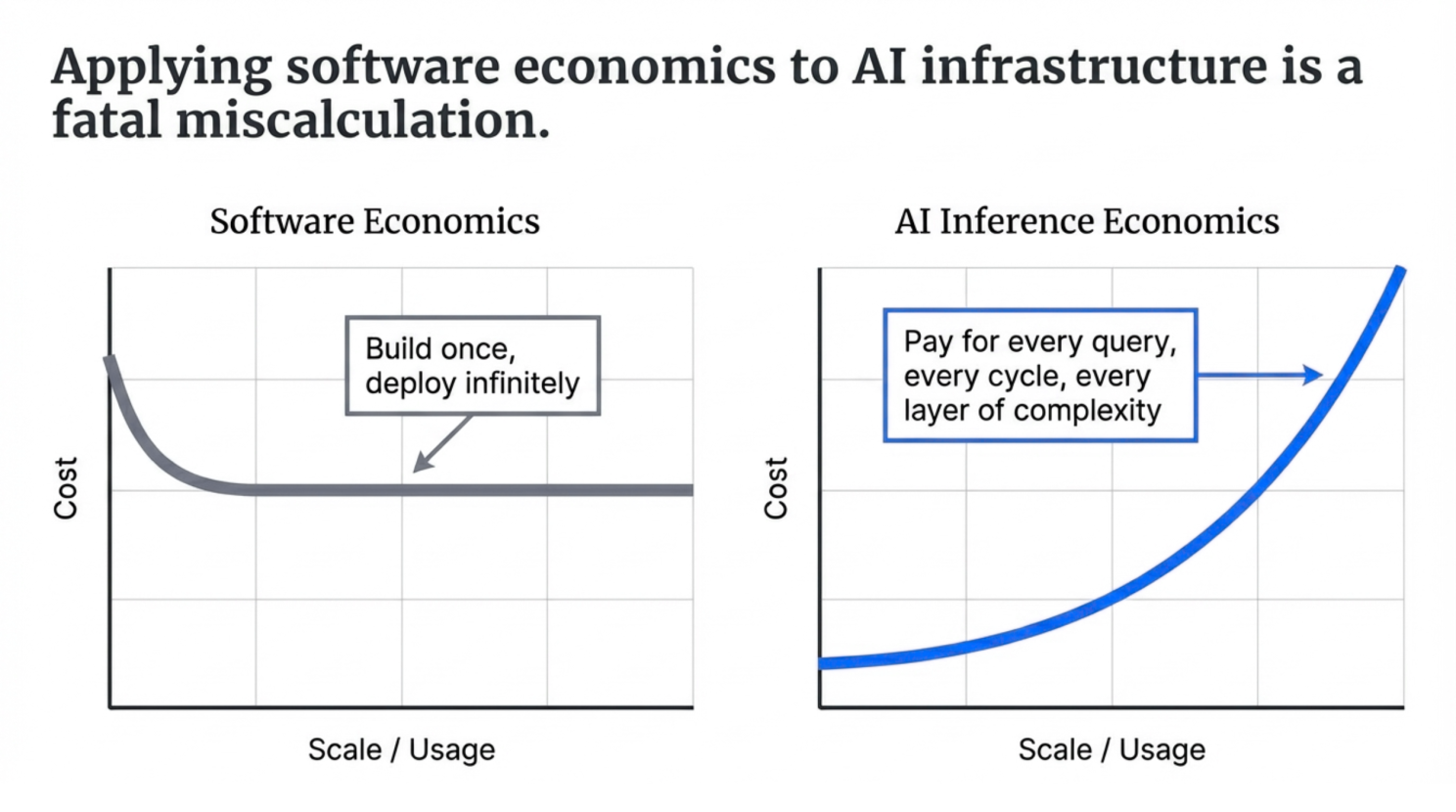

The economics of AI are fundamentally different from the economics of software.

And these costs do not scale linearly. They compound.

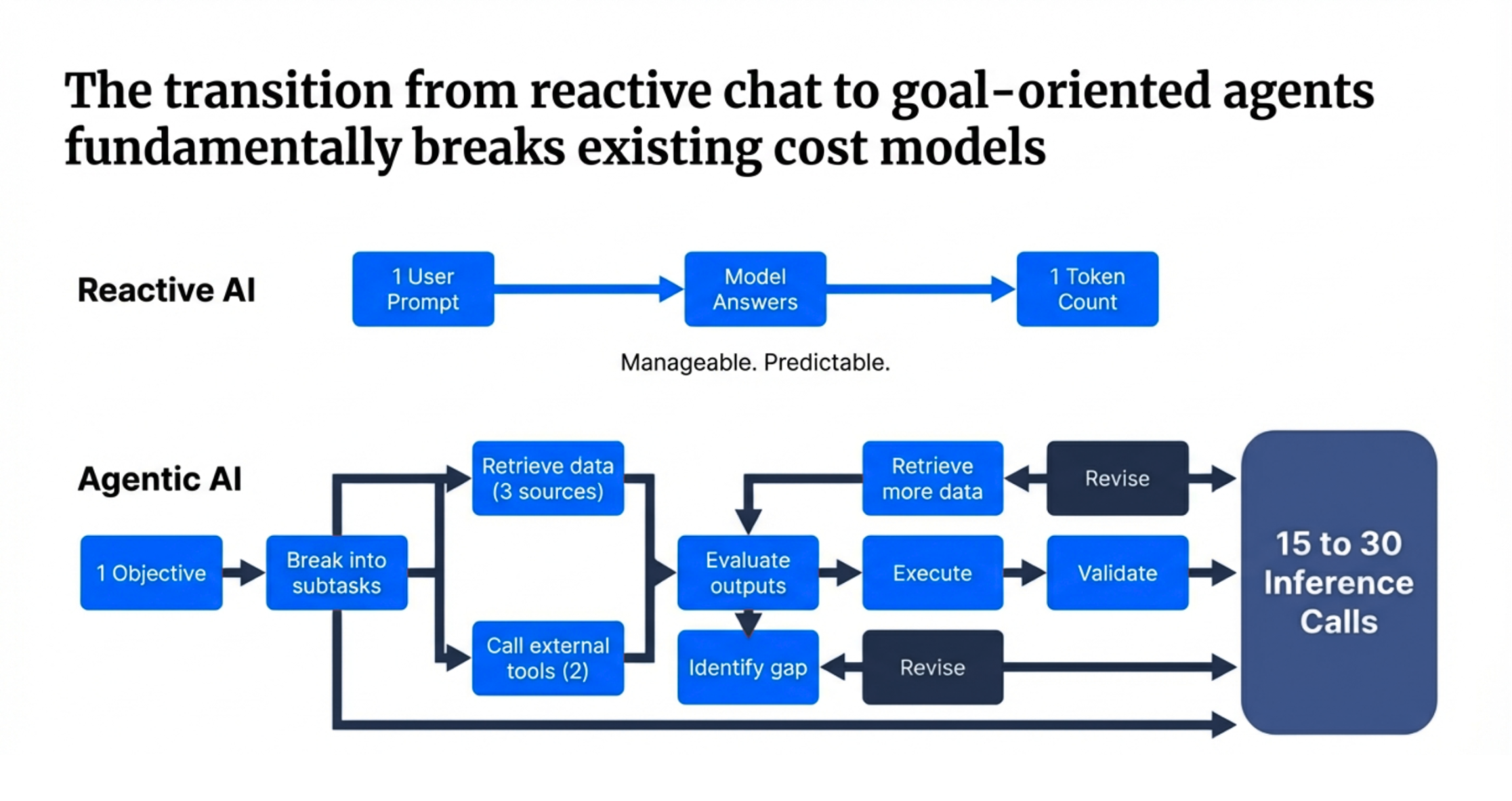

Most organizations today are operating what might be called first-generation AI systems: reactive systems. A user asks a question, the model responds, and the cost is measured in tokens. This model is manageable, predictable, and relatively easy to estimate.

But the systems now being deployed are agentic. They do not simply answer questions. They pursue objectives.

A single agentic task might involve:

Now multiply that by your workforce, by your customer interactions, and by the ambition of your AI roadmap.

The cost dynamics change quickly. In an agentic workflow, token consumption scales with the complexity of the problems the system is trying to solve. And complexity is precisely what organizations are deploying AI agents to handle.
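To make the contrast concrete, here is a minimal sketch of the arithmetic. It is an illustration under stated assumptions, not a pricing model: the per-token prices are placeholders rather than any vendor's rates, and the agentic case assumes each step re-sends the original prompt plus every earlier step's output, which is one common pattern but not the only one. The names `Pricing`, `chat_cost`, and `agentic_cost` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in USD per 1,000 tokens (placeholders, not vendor rates)."""
    input_per_1k: float
    output_per_1k: float


def call_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one model call: input and output tokens are billed separately."""
    return (input_tokens * pricing.input_per_1k
            + output_tokens * pricing.output_per_1k) / 1000


def chat_cost(pricing: Pricing, prompt_tokens: int, reply_tokens: int) -> float:
    """Reactive system: one question, one response, one call."""
    return call_cost(pricing, prompt_tokens, reply_tokens)


def agentic_cost(pricing: Pricing, prompt_tokens: int,
                 tokens_per_step: int, steps: int) -> float:
    """Agentic task, assuming every step re-reads the full accumulated context."""
    total = 0.0
    context = prompt_tokens
    for _ in range(steps):
        total += call_cost(pricing, context, tokens_per_step)
        context += tokens_per_step  # this step's output becomes input to the next
    return total


if __name__ == "__main__":
    pricing = Pricing(input_per_1k=0.001, output_per_1k=0.002)  # assumed values
    print("chat:", chat_cost(pricing, 500, 300))
    for steps in (1, 10, 20):
        print(f"agentic, {steps} steps:", agentic_cost(pricing, 500, 300, steps))
```

Under these assumptions, a one-step agentic task costs the same as a chat exchange, while doubling the number of steps more than doubles the cost, because each additional step pays again for everything that came before it.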

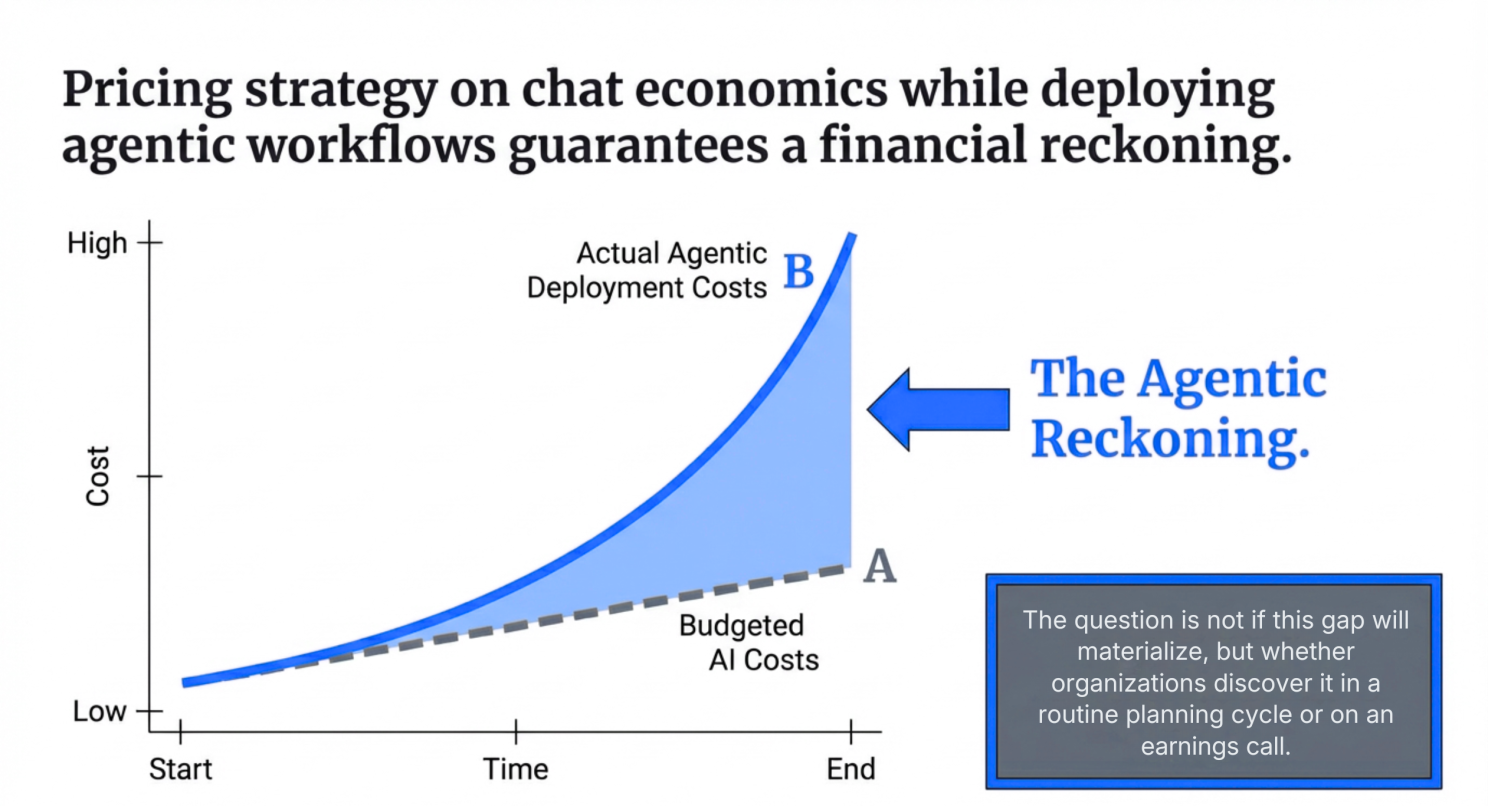

Organizations that priced their AI strategies based on chat-style economics will face a reckoning when agentic systems begin operating at scale. The real question is whether that realization arrives during a planning cycle, or during an earnings call.

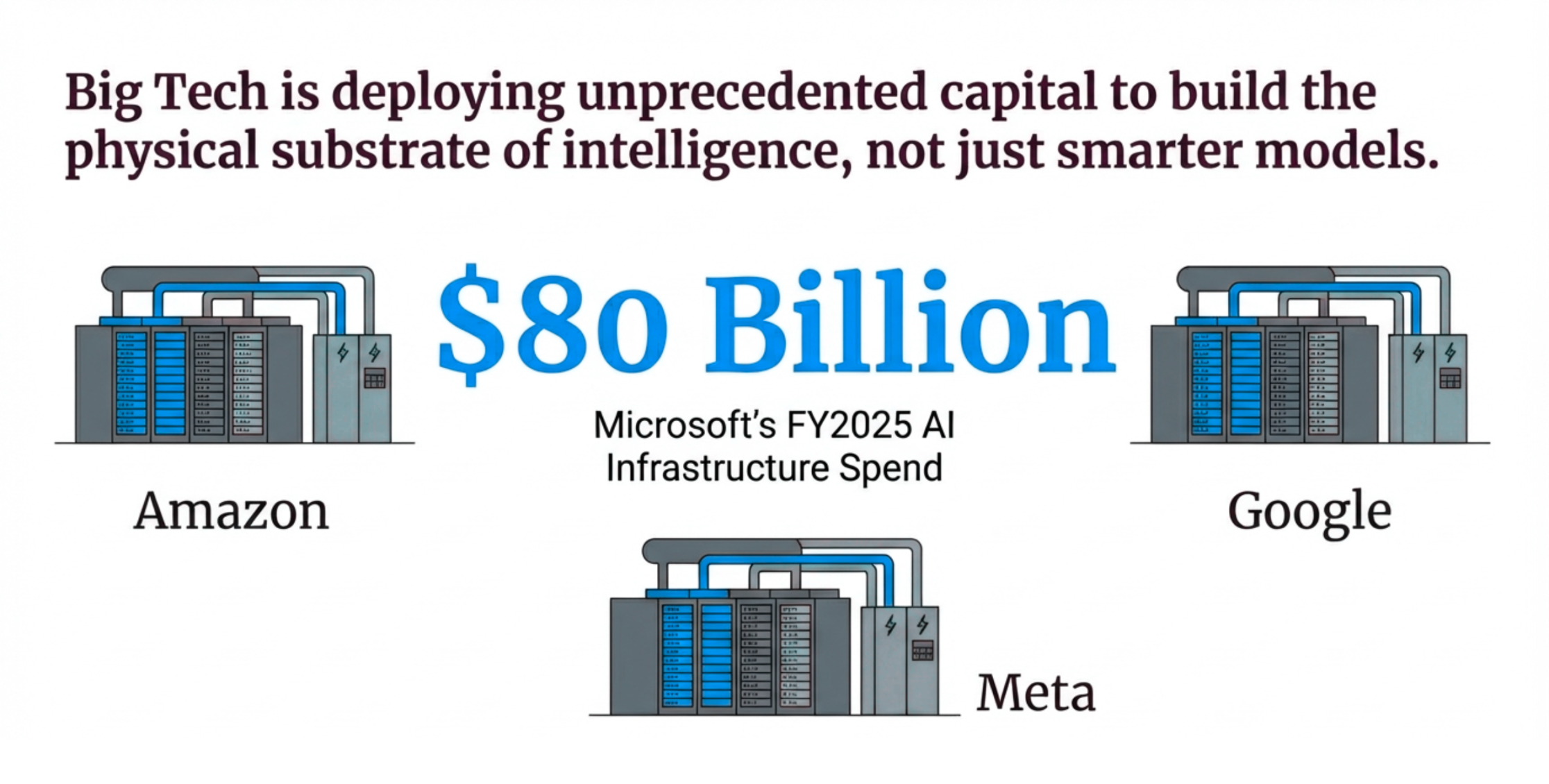

In reality, the capital is not going toward making smarter models.

The models are already capable enough for most enterprise applications. What the industry is racing to construct is the infrastructure required to run those models at the scale enterprise demand requires: compute capacity, power availability, cooling systems, and high-speed interconnects, the physical foundation that large-scale intelligence depends on.

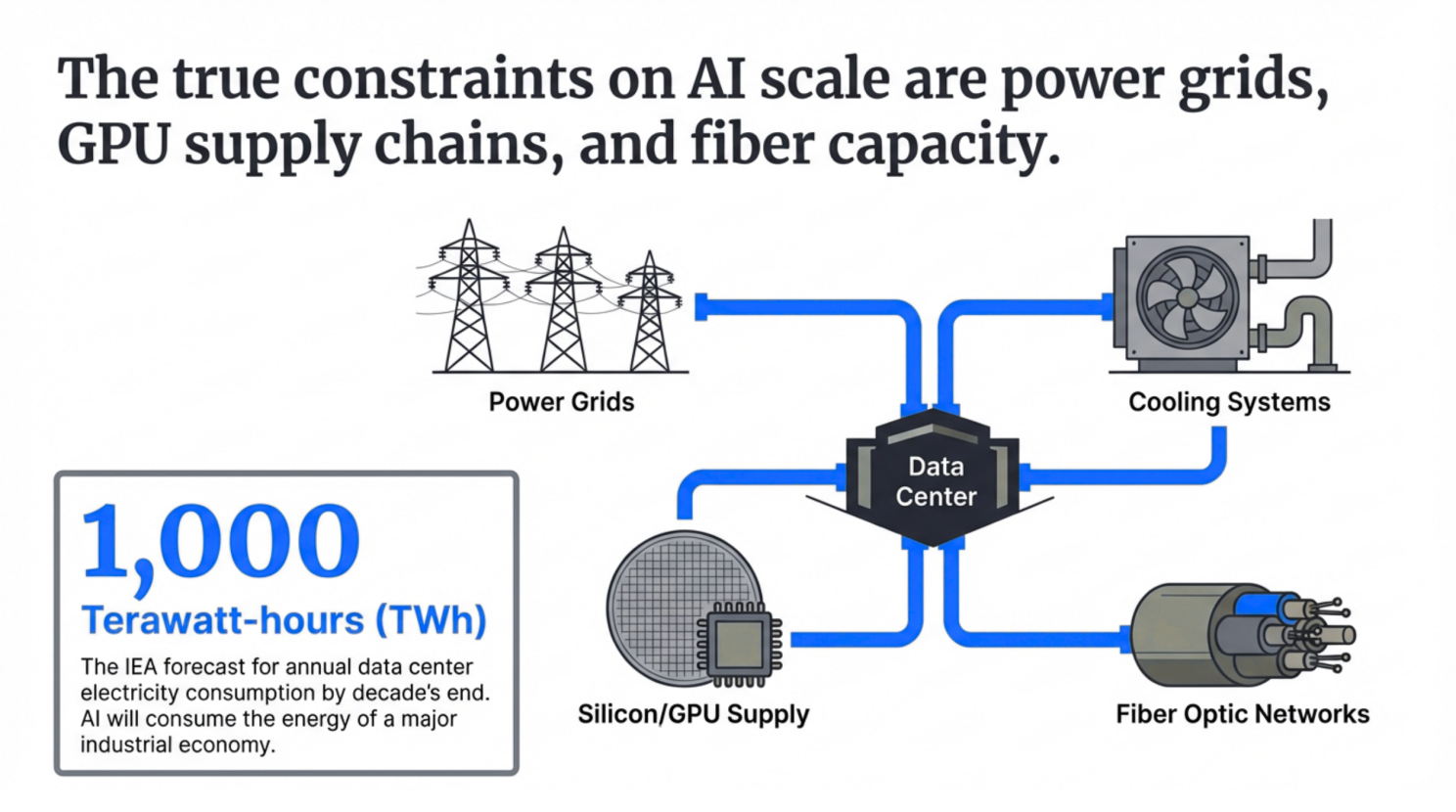

Energy projections reinforce this shift.

Power grids, GPU supply chains, and fiber capacity are emerging as the constraints that will determine which organizations can scale their AI operations and which will encounter limits.

This figure reflects the infrastructure demands of AI systems operating at the scale currently being planned.

The hyperscalers understand this. Their infrastructure investments are designed to ensure that the ceiling for AI deployment remains above them.

The question for every other organization is where that ceiling sits relative to its own ambitions.

The uncomfortable reality is that, for most organizations, their AI infrastructure strategy is already being decided.

It is being shaped through the accumulation of vendor choices, cloud agreements, and architectural decisions made by teams optimizing for immediate delivery timelines.

These engineers and architects are making rational decisions given their constraints. They are optimizing for speed and capability in order to ship products and systems. What they are not optimizing for is long-term unit economics, vendor concentration risk, or the cost structure of an agentic future that may not yet be fully modeled.

By the time infrastructure strategy becomes visible at the executive level, the dependencies are already embedded, switching costs are substantial, and strategic flexibility has narrowed.

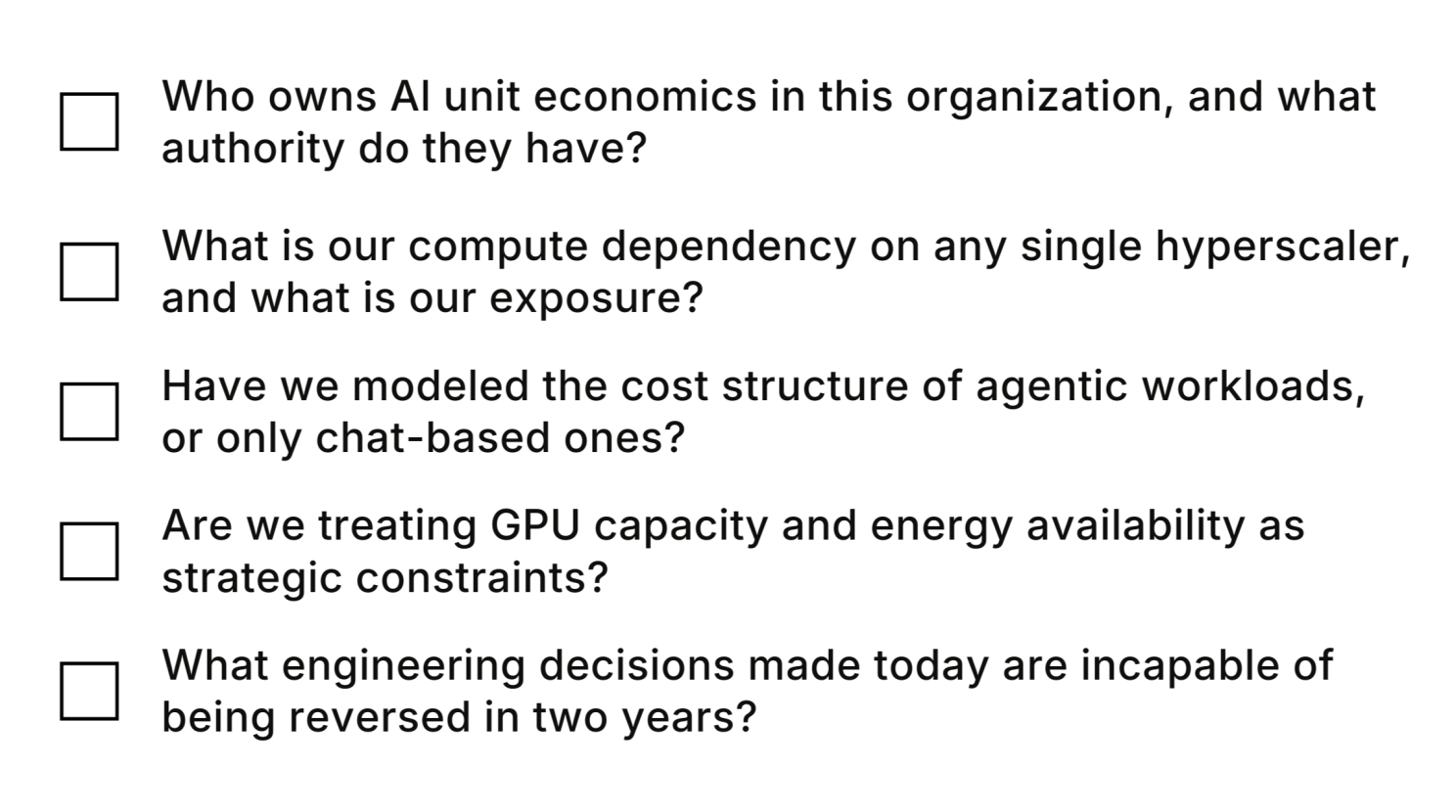

The questions boards should be asking today are straightforward but rarely raised:

These are questions about operating leverage, strategic optionality, and long-term margin structure.

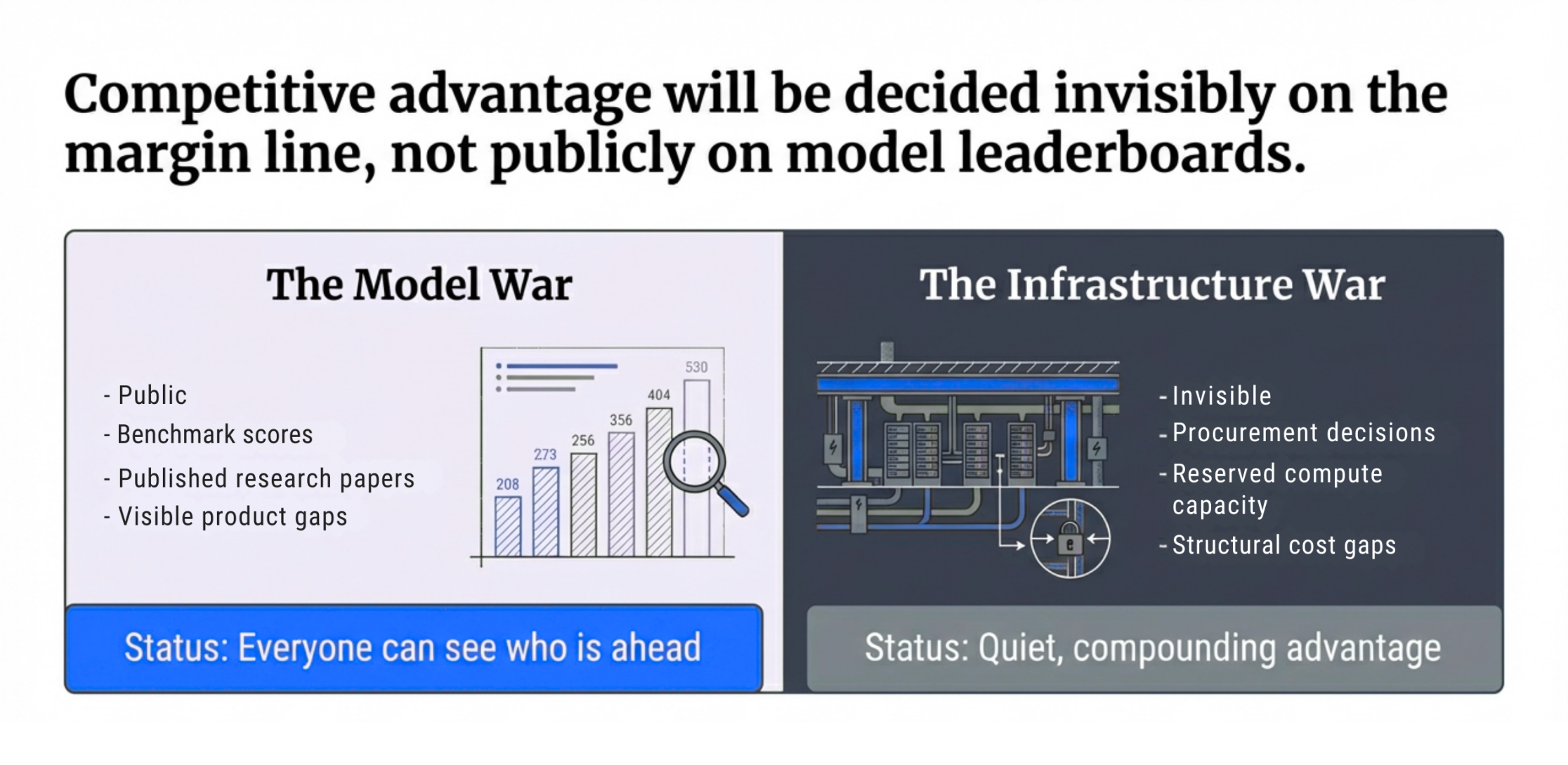

Model capability is a race that everyone can see. Benchmark results are public, research papers are published, and improvements in model performance quickly become visible across the industry.

Infrastructure advantage operates differently. It remains invisible until its effects become impossible to ignore.

You do not notice it when a competitor negotiates long-term compute capacity that structurally lowers their AI operating costs. You do not see it when their agentic architecture is designed for efficiency from the start while others retrofit systems originally built for chat interfaces. And you do not notice it when their board has been examining inference economics for years while others remain focused on capability demonstrations.

You notice it in financial results. You see it when a competitor can run AI at scale while maintaining healthy margins. You see it when their AI systems become deeply embedded into operations because the cost structure makes that possible.

At that point, the advantage is no longer about product features. It becomes structural.

That is the competition that is actually unfolding.

But once the gap becomes visible, it will be too late to close.

The organizations that ultimately win will not be the ones that purchased the best AI tools. They will be the ones that understood early that running AI is a fundamentally different challenge from buying it.


Mar 31, 2026

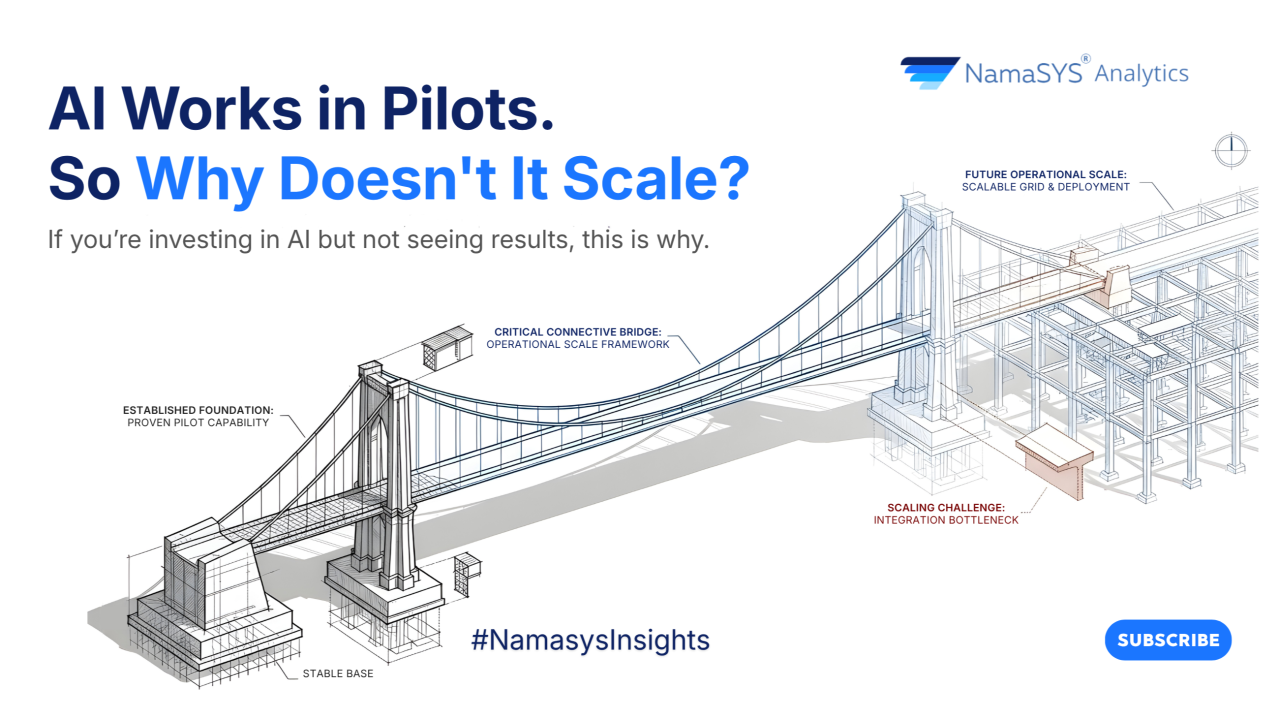

AI success isn’t about better models, it’s about better systems. This article uncovers why most initiatives stall after pilots, and what it takes to convert AI capability into enterprise-wide outcomes.

Bring clarity, efficiency, and agility to every department. With Namasys, your teams are empowered by AI that works in sync with enterprise systems and strategy.