Mar 31, 2026

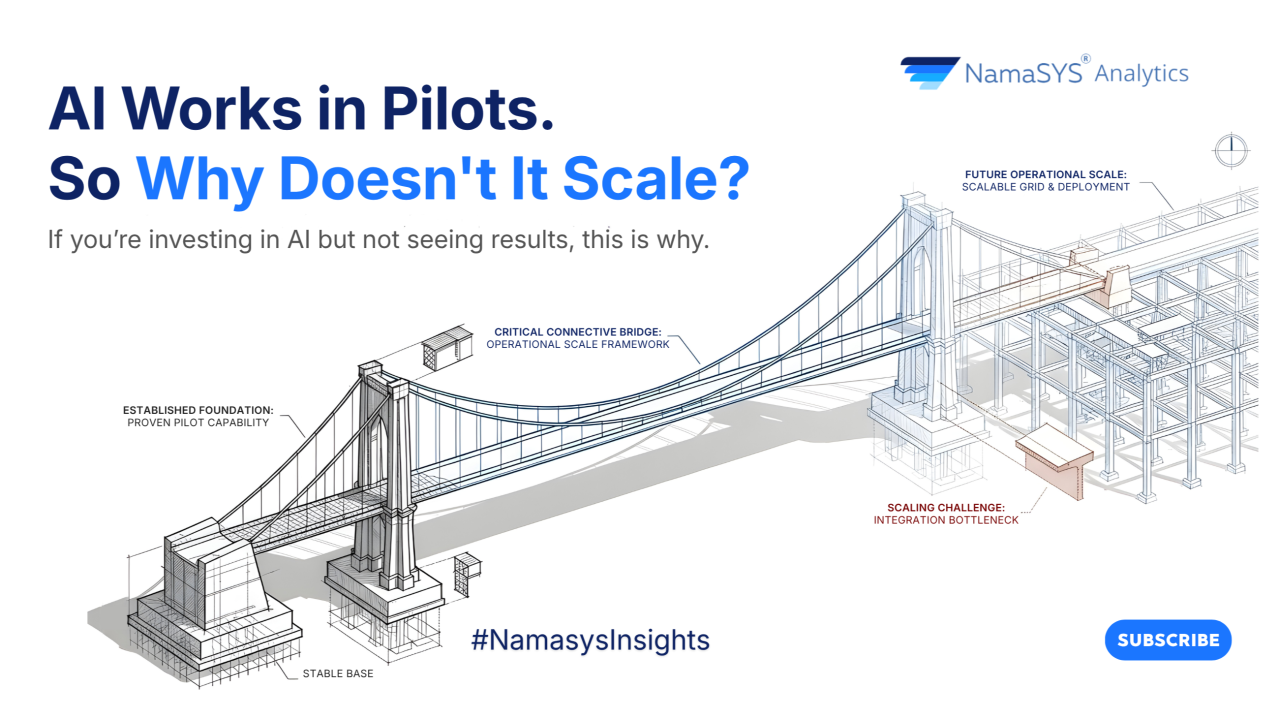

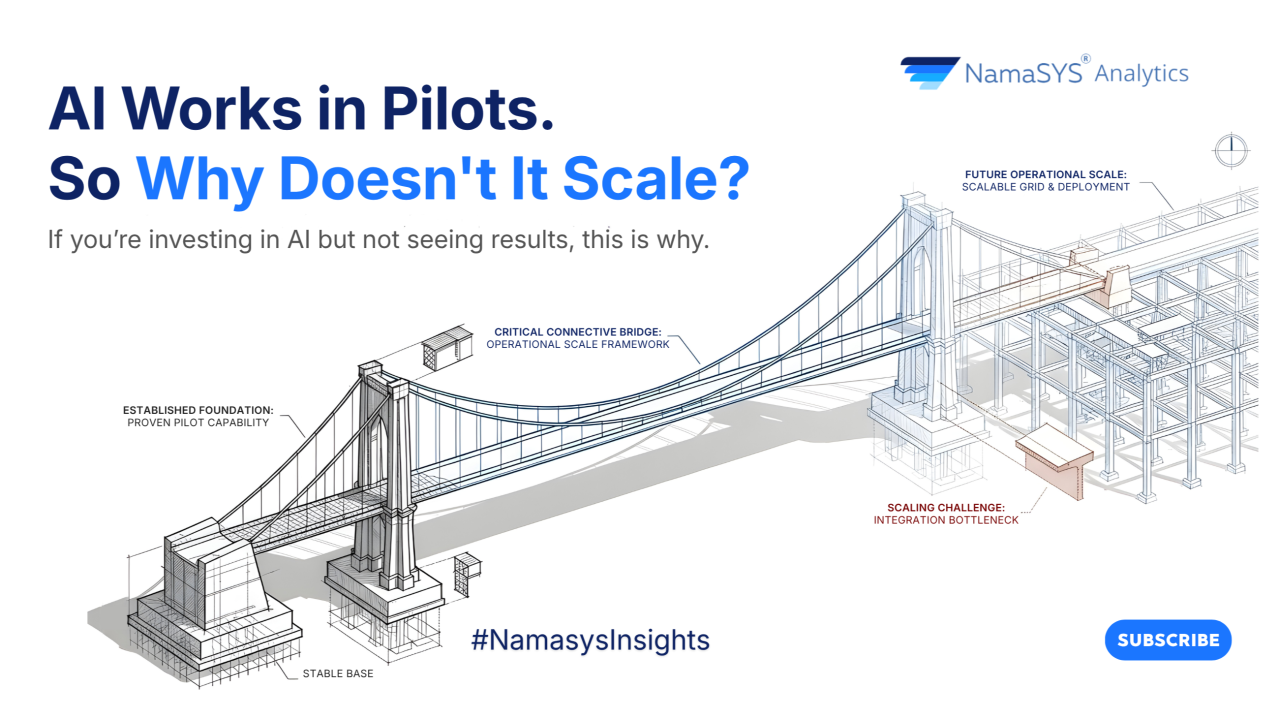

AI success isn’t about better models, it’s about better systems. This article uncovers why most initiatives stall after pilots, and what it takes to convert AI capability into enterprise-wide outcomes.


Every CHRO today opens the same conversation: "We're modernizing hiring with AI."

The job boards are integrated. The screening tools are stacked. The recruiters have ChatGPT tabs open all day.

And then the quarterly hiring review lands, and the same problems are still on the table. Inconsistent shortlists. Interviewer-dependent decisions. Roles that took 60 days last year still take 60 days.

Despite heavy tooling, the average global time-to-hire still sits around 36–42 days (SHRM), largely unchanged over the last decade.

This is the gap most enterprise AI tools quietly miss. They make recruiters faster. They don't make hiring decisions better.

Most "AI hiring tools" in the market are autocomplete in disguise. They draft job descriptions, summarize resumes, and suggest interview questions. The recruiter still drives every decision. The AI just types faster.

KYNAA inverts that model.

It is built as an agentic decision system, where AI agents execute critical steps like structured interviews and evaluation within a governed workflow and produce a structured, auditable output.

Agentic AI, in this context, means systems that don't just generate content, but take actions across a workflow, make decisions based on defined logic, and produce consistent outputs without human intervention at each step.

The recruiter isn't replaced. The inconsistency is.

The issue isn't tooling. It's standardization.

McKinsey and Harvard Business Review research consistently shows that decision variability, not lack of data, is one of the biggest drivers of inconsistent outcomes in organizations. Hiring is a textbook example.

That's not a hiring inefficiency. That's a governance gap.

CXOs don't need faster recruiters. They need consistent, defensible decisions at scale. That's what an agentic system enforces: the same logic, the same evaluation structure, and the same scoring discipline, across every candidate, every time.

It writes the job description itself. Hiring managers describe the role in their own words, and KYNAA converts that into a structured, role-ready JD. That JD is then turned into an evaluation framework (skills, weights, criteria) that is generated upfront, not improvised during interviews.

It scores resumes on role-fit, not keywords. The model evaluates alignment to the framework, not phrasing tricks in the resume.
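To make the idea of a weighted evaluation framework concrete, here is a minimal sketch in Python. It is an illustration only, not KYNAA's actual implementation: the `Criterion` class, the `role_fit_score` function, the skill names, the weights, and the 0-5 rating scale are all assumptions introduced for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One line of a hypothetical evaluation framework."""
    skill: str
    weight: float  # relative importance; normalized by total weight when scoring


def role_fit_score(framework: list[Criterion], ratings: dict[str, float]) -> float:
    """Weighted average of per-skill ratings (assumed 0-5 scale).

    A skill missing from `ratings` counts as 0, so gaps lower the score.
    """
    total_weight = sum(c.weight for c in framework)
    if total_weight <= 0:
        raise ValueError("framework must have positive total weight")
    weighted = sum(c.weight * ratings.get(c.skill, 0.0) for c in framework)
    return weighted / total_weight


# Illustrative framework, fixed before any candidate is seen.
framework = [
    Criterion("sql", 0.5),
    Criterion("stakeholder_communication", 0.3),
    Criterion("forecasting", 0.2),
]

# Illustrative ratings for one candidate, keyed by the framework's skills.
candidate_ratings = {"sql": 4.0, "stakeholder_communication": 3.0, "forecasting": 5.0}

print(round(role_fit_score(framework, candidate_ratings), 2))  # 3.9
```

The point of the sketch is that the criteria and weights exist before the first resume is read, so every candidate is scored against the same structure.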

It conducts the first-round interview itself. Autonomously. Dynamic questioning, real-time scoring, full transcripts. This matters because structured interviews are up to 2x more predictive of job performance than unstructured ones (Schmidt & Hunter, Psychological Bulletin), yet most organizations still run largely unstructured processes.

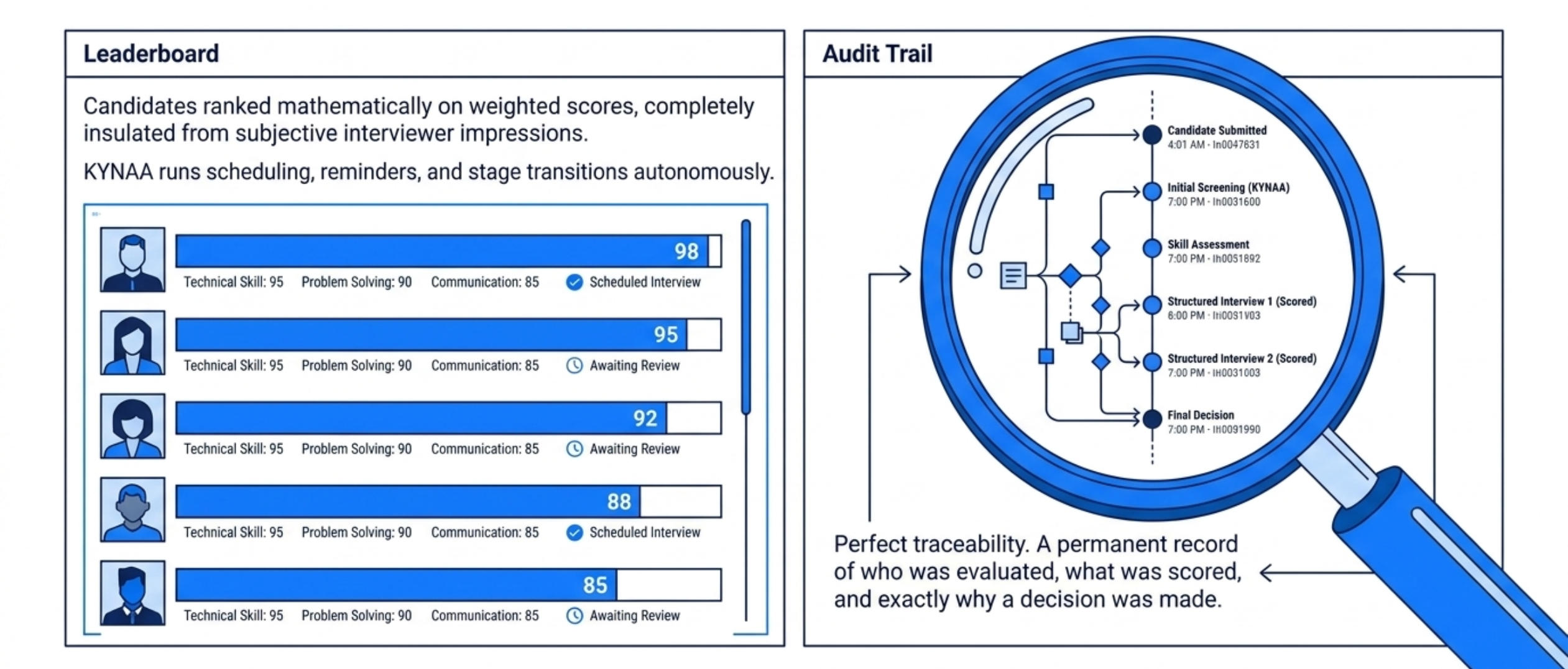

It runs the operational layer: scheduling, reminders, structured feedback capture. It produces a comparable shortlist where candidates are ranked on weighted scores, not interviewer impressions. And it maintains a decision audit trail: who evaluated, what was scored, and why a decision was made.

In our work across hiring transformations, this lack of traceability is one of the most consistent gaps we see.
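A ranked shortlist with an audit trail can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions, not a description of how KYNAA works internally: `AuditEntry`, `build_shortlist`, the evaluator label, the candidate names, and all scores are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    """One hypothetical audit record per evaluated candidate."""
    candidate: str
    evaluator: str   # who evaluated (an agent or a person)
    scores: dict     # what was scored
    total: float     # weighted total used for ranking
    rationale: str   # why the candidate landed where they did
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def build_shortlist(evaluations, weights, evaluator, top_n):
    """Rank candidates by weighted score and keep a record for each one.

    Returns (shortlist, trail): the top_n candidate names, and the full
    list of audit entries sorted from highest to lowest total.
    """
    trail = []
    for name, scores in evaluations.items():
        total = sum(w * scores.get(skill, 0.0) for skill, w in weights.items())
        trail.append(
            AuditEntry(
                candidate=name,
                evaluator=evaluator,
                scores=dict(scores),
                total=round(total, 2),
                rationale=f"weighted total {total:.2f} using weights {weights}",
            )
        )
    trail.sort(key=lambda entry: entry.total, reverse=True)
    shortlist = [entry.candidate for entry in trail[:top_n]]
    return shortlist, trail


# Illustrative weights and scores (0-5 scale assumed).
weights = {"sql": 0.5, "communication": 0.3, "forecasting": 0.2}
evaluations = {
    "candidate_a": {"sql": 4, "communication": 3, "forecasting": 5},
    "candidate_b": {"sql": 5, "communication": 4, "forecasting": 2},
    "candidate_c": {"sql": 3, "communication": 5, "forecasting": 3},
}

shortlist, trail = build_shortlist(evaluations, weights, "interview-agent-v1", top_n=2)
print(shortlist)  # ['candidate_b', 'candidate_a']
```

Because every candidate gets an entry, including those who miss the shortlist, any ranking can later be traced back to the scores and weights that produced it.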

A system like this raises obvious questions.

What about candidate experience? Structured, consistent interviews remove randomness. Candidates are evaluated on the same criteria, not interviewer mood or style.

What about quality at scale? Consistency tends to break the moment hiring volume spikes. KYNAA applies the same evaluation framework to ten candidates or to hundreds, across hiring cycles, without degradation.

What about integration? The system is designed to sit alongside existing ATS workflows, not replace them.

The cost of unstructured hiring isn't visible in one report. It compounds through poor hiring decisions, repeated hiring cycles, and loss of trust in the hiring function itself. A bad hire can cost 30–50% of annual salary, per U.S. Department of Labor estimates.

But the bigger cost is quieter: decisions that cannot be explained, processes that cannot scale, outcomes that cannot be trusted.

KYNAA was not built as a standalone tool. Hiring is the first module in a broader philosophy.

Namasys Analytics is a consulting-first AI firm that transforms fragmented business processes into structured, outcome-driven decision systems. Most enterprise software was designed to digitize workflows: capture inputs, route them, store outputs. We start a layer deeper: identifying where the actual decisions inside a process live, and rebuilding those decisions as structured, agentic systems that operate consistently at scale.

Hiring was simply the most obvious place to start. High volume. High subjectivity. High business impact.

The future of hiring will not be defined by faster workflows. It will be defined by better decisions.

And the organizations that win will not be the ones hiring more. They will be the ones deciding better.

We’re currently doing a limited set of demos for teams evaluating structured hiring systems. If relevant, feel free to reach out.

