Apr 28, 2026

Most AI hiring tools speed up workflows but don’t improve decisions. This article explores why hiring is fundamentally a decision problem, and how KYNAA brings structure, consistency, and intelligence to it.

For the last two years, leadership teams have benchmarked AI as if it were a product race: model size, capability, demos, leaderboards.

That phase mattered, because we needed to prove intelligence works.

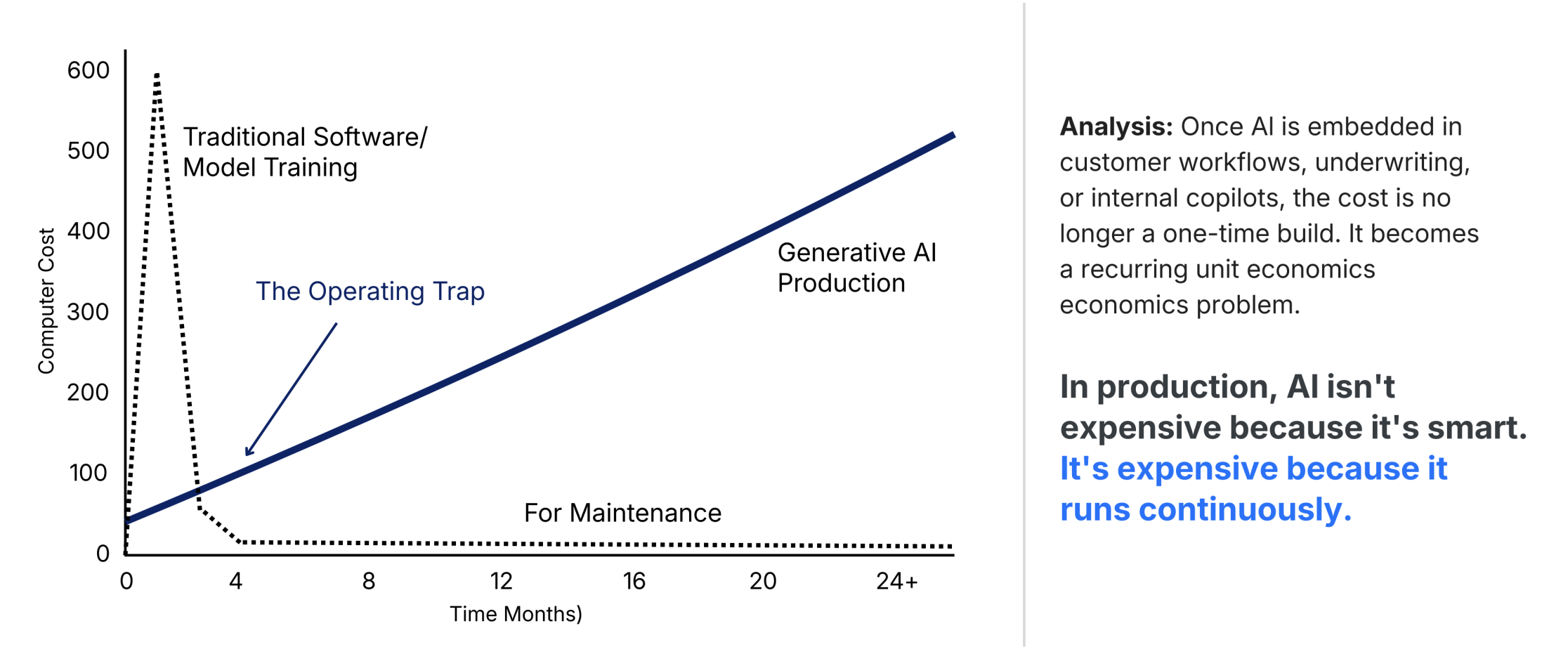

But the phase we’re entering is different. It’s an operating phase. And operating advantages are almost never about “better intelligence.” They’re about cost structure, predictability, and throughput.

In production, AI isn’t expensive because it’s smart. It’s expensive because it runs continuously.

Training is episodic. Inference is structural. Once AI is embedded in customer workflows, underwriting, internal copilots, or agentic operations, cost is no longer a one-time build. It becomes a recurring unit economics problem.

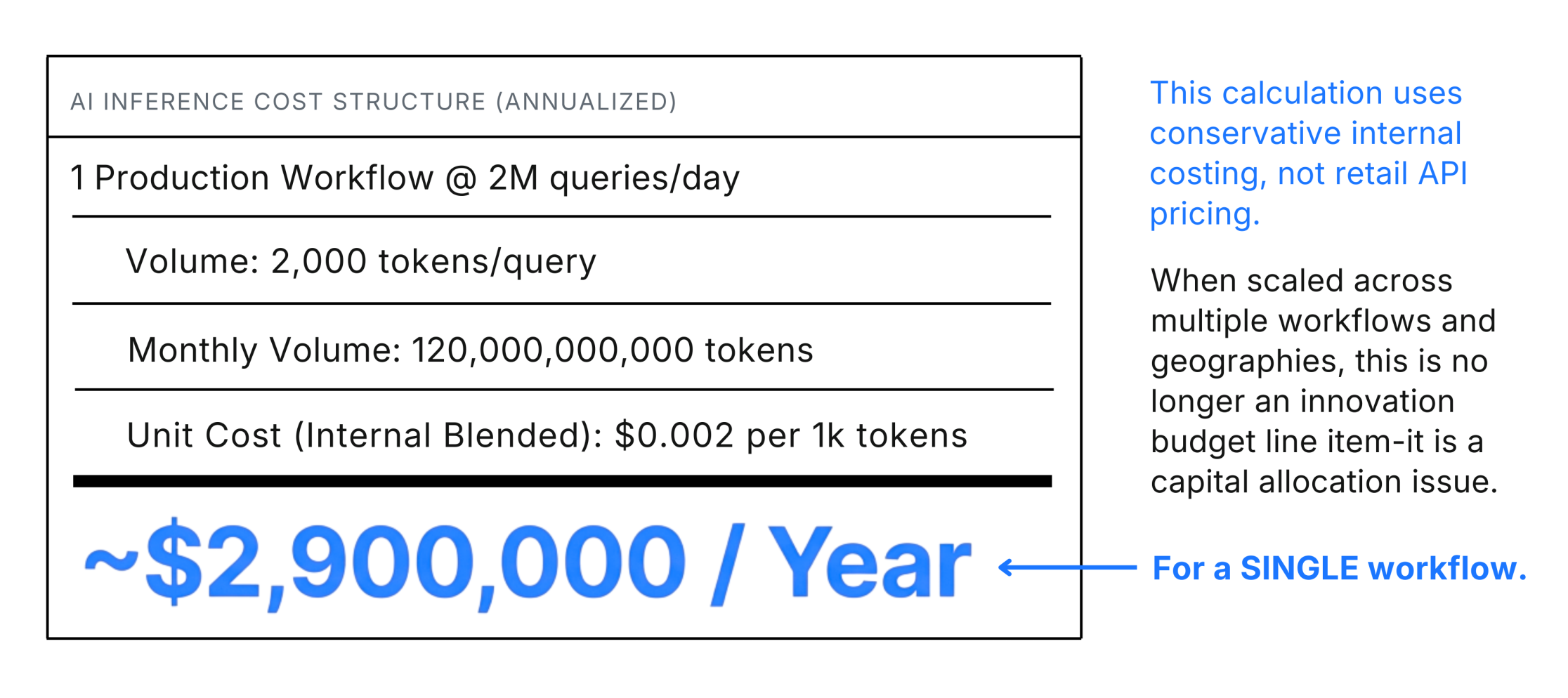

A conservative way to see it: assume one production workflow handles 2 million queries/day. At ~2,000 tokens per query (input + output + retrieval + tool calls + validation), that’s ~4B tokens/day, or ~120B tokens/month. Even at a blended internal cost of $0.002 per 1,000 tokens (not retail API pricing), that works out to ~$240K/month, or roughly $2.9M/year, for a single workflow.
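The arithmetic above can be reproduced in a few lines. This is a minimal sketch using only the figures quoted in the paragraph; the function name and the 30-day month are assumptions made for illustration.

```python
def annual_inference_cost(queries_per_day, tokens_per_query,
                          cost_per_1k_tokens, days_per_month=30):
    """Return (tokens per month, annual cost in dollars) for one workflow.

    days_per_month=30 is a simplifying assumption, not a figure from the article.
    """
    tokens_per_month = queries_per_day * tokens_per_query * days_per_month
    monthly_cost = tokens_per_month / 1000 * cost_per_1k_tokens
    return tokens_per_month, monthly_cost * 12


# Figures from the paragraph: 2M queries/day, ~2,000 tokens/query,
# $0.002 per 1,000 tokens blended internal cost.
tokens, yearly = annual_inference_cost(2_000_000, 2_000, 0.002)
print(f"{tokens / 1e9:.0f}B tokens/month, ${yearly / 1e6:.2f}M/year")
```

Changing any one input (query volume, tokens per query, or unit cost) shows how quickly the annual figure moves, which is the point of treating inference as unit economics.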

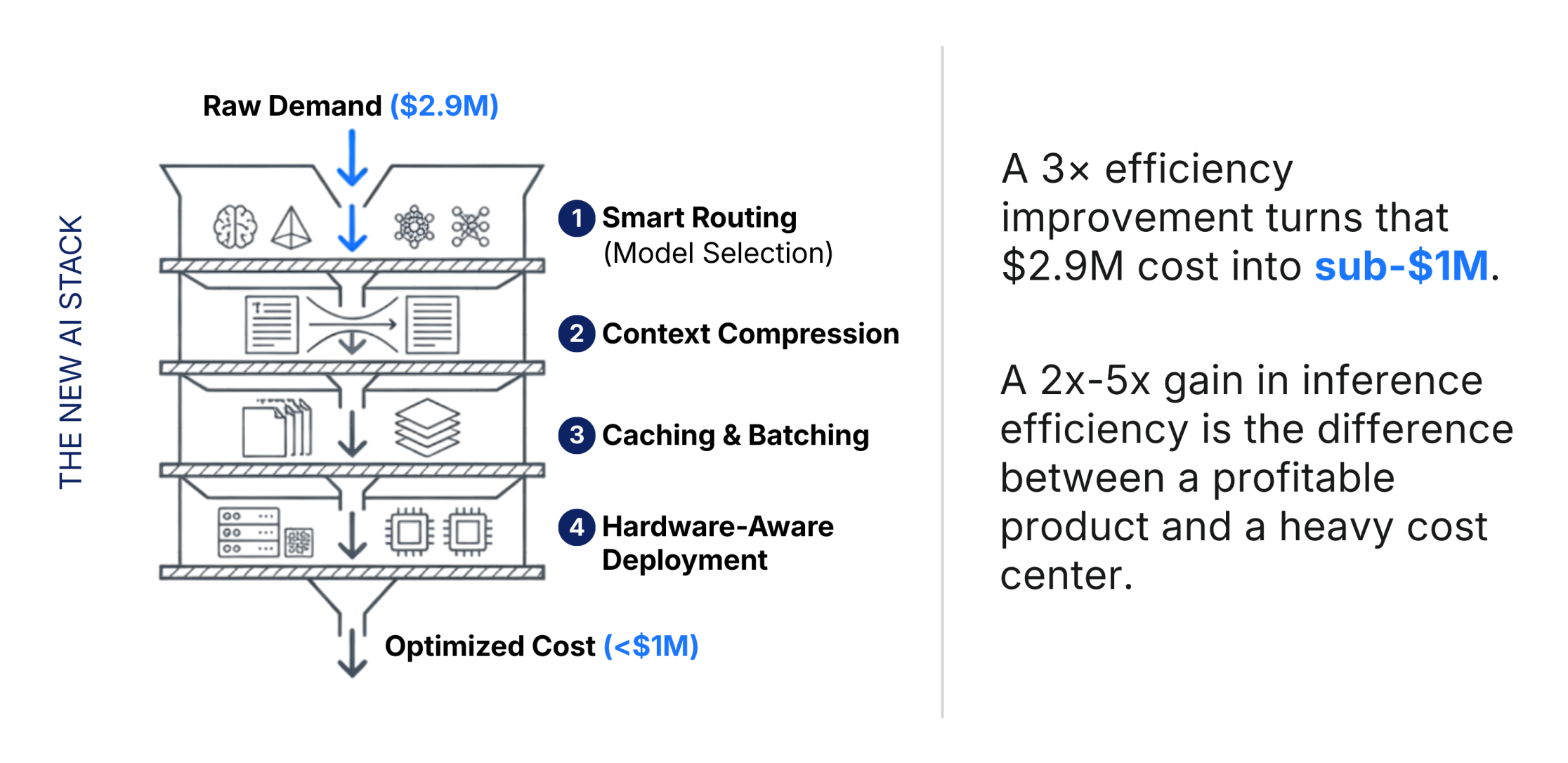

Now here’s the strategic point: a 2×–5× inference efficiency gain is not “optimization.” It’s margin design.

Routing the right requests to the right model. Context compression. Caching. Batching. Hardware-aware deployment. Better agent design (fewer loops, fewer tool calls, tighter retrieval). These are not engineering details. They’re cost architecture.
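Two of these levers, routing and caching, can be illustrated with a toy example. The sketch below is hypothetical throughout: the class name, the two model tiers, their per-token costs, and the size threshold are all assumptions, and the "model call" is a placeholder string rather than a real API.

```python
from dataclasses import dataclass, field


@dataclass
class CostAwareRouter:
    # Hypothetical blended costs in dollars per 1,000 tokens (assumptions).
    small_cost_per_1k: float = 0.0005
    large_cost_per_1k: float = 0.004
    # Requests at or below this estimated size go to the small model.
    small_model_token_limit: int = 500
    cache: dict = field(default_factory=dict)
    spend: float = 0.0

    def handle(self, prompt: str, estimated_tokens: int) -> str:
        # Caching: a repeated prompt costs nothing the second time.
        if prompt in self.cache:
            return self.cache[prompt]
        # Routing: pick the cheapest tier assumed able to handle the request.
        if estimated_tokens <= self.small_model_token_limit:
            tier, rate = "small", self.small_cost_per_1k
        else:
            tier, rate = "large", self.large_cost_per_1k
        self.spend += estimated_tokens / 1000 * rate
        answer = f"[{tier}] response to: {prompt}"  # placeholder for a model call
        self.cache[prompt] = answer
        return answer


router = CostAwareRouter()
router.handle("reset my password", 400)
router.handle("reset my password", 400)       # served from cache, no new spend
router.handle("summarize this 40-page contract", 6000)
print(f"total spend: ${router.spend:.4f}")
```

A real deployment would route on more than token count and would need cache invalidation, but even this simple version shows where the cost decisions sit: before the model is ever called.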

A 3× efficiency improvement turns ~$2.9M into sub-$1M. That delta compounds across workflows, geographies, and 24/7 coverage. That’s when AI becomes a capital allocation discussion, not an innovation discussion.
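As a rough illustration of how that delta scales, the sketch below applies the 2×–5× range to the ~$2.88M/year baseline implied by the earlier figures. The number of workflows (10) is a hypothetical input, not a figure from this article.

```python
def cost_after_gain(baseline_cost, efficiency_gain):
    """Annual cost once the same work needs 1/efficiency_gain of the inference spend."""
    return baseline_cost / efficiency_gain


def portfolio_savings(baseline_cost, efficiency_gain, workflows):
    """Total annual savings if every workflow sees the same gain (an assumption)."""
    return (baseline_cost - cost_after_gain(baseline_cost, efficiency_gain)) * workflows


BASELINE = 2_880_000  # dollars/year for one workflow, from the earlier arithmetic

for gain in (2, 3, 5):
    per_workflow = cost_after_gain(BASELINE, gain)
    saved = portfolio_savings(BASELINE, gain, workflows=10)  # 10 is hypothetical
    print(f"{gain}x: ${per_workflow / 1e6:.2f}M per workflow, "
          f"${saved / 1e6:.1f}M saved across 10 workflows")
```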

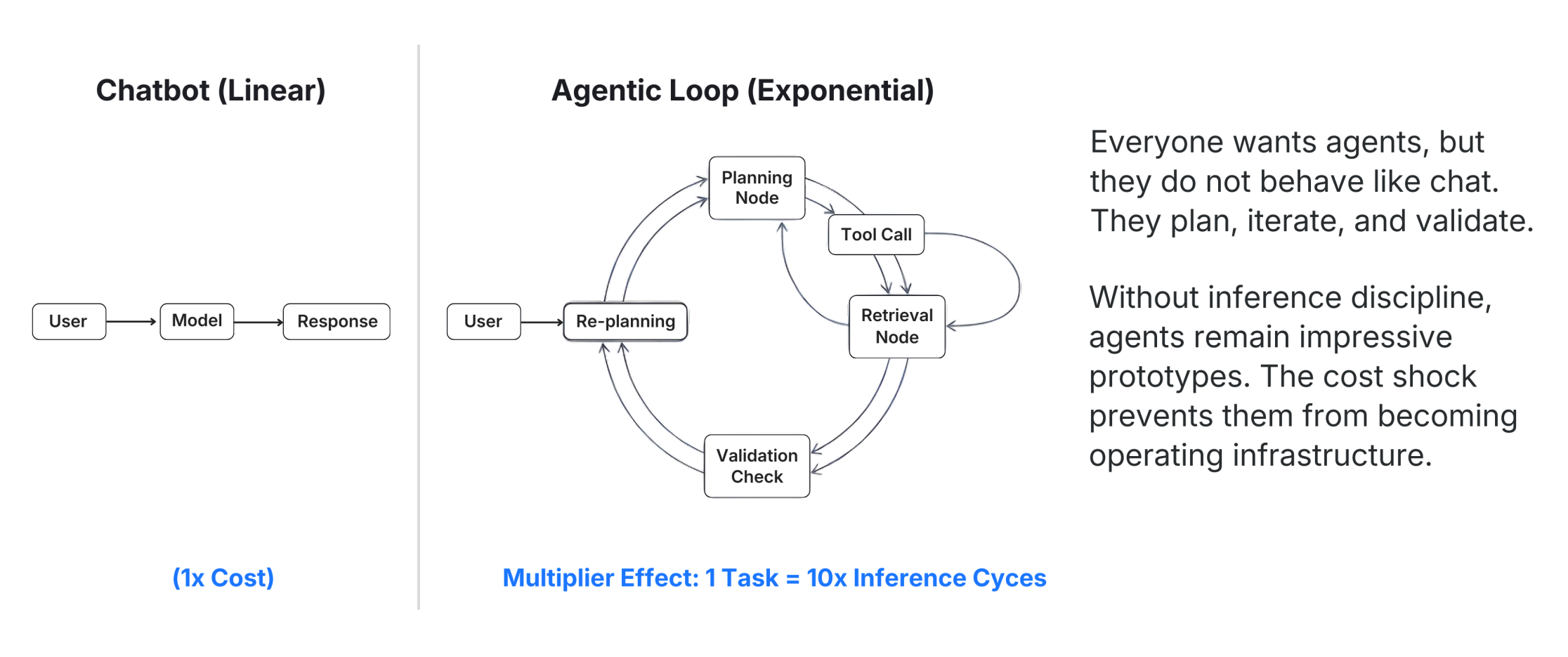

Everyone wants “agentic AI,” but agentic systems don’t behave like chat. They plan, re-plan, call tools, retrieve context, validate outputs, and iterate. One “task” becomes multiple inference cycles.

So the question isn’t “Can we build agents?” The question is: can we afford to run them at scale without cost shock?

Without inference discipline, agents remain impressive prototypes. With inference discipline and observability, they become operating infrastructure.
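To make the “one task becomes multiple inference cycles” point concrete, here is a small sketch comparing a single chat call with an agentic task. The call counts (two planning calls, four tool calls, one validation pass, one full retry) are illustrative assumptions; the token and cost figures reuse the earlier example.

```python
def inference_calls_per_task(plan_calls, tool_calls, validation_calls, retries):
    """Total model calls for one agent task, assuming each retry repeats the full loop."""
    per_attempt = plan_calls + tool_calls + validation_calls
    return per_attempt * (1 + retries)


def task_cost(calls, tokens_per_call, cost_per_1k_tokens):
    """Dollar cost of one task given its number of inference calls."""
    return calls * tokens_per_call / 1000 * cost_per_1k_tokens


# Single chat-style request: one inference call.
chat_cost = task_cost(1, 2_000, 0.002)

# Hypothetical agent task: plan, call tools, validate, then retry once.
agent_calls = inference_calls_per_task(
    plan_calls=2, tool_calls=4, validation_calls=1, retries=1
)
agent_cost = task_cost(agent_calls, 2_000, 0.002)
multiplier = agent_cost / chat_cost

print(f"{agent_calls} calls per task, {multiplier:.0f}x the cost of one chat call")
```

Under these assumptions the same “task” costs an order of magnitude more than a chat turn, which is why fewer loops and fewer tool calls show up directly in the unit economics.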

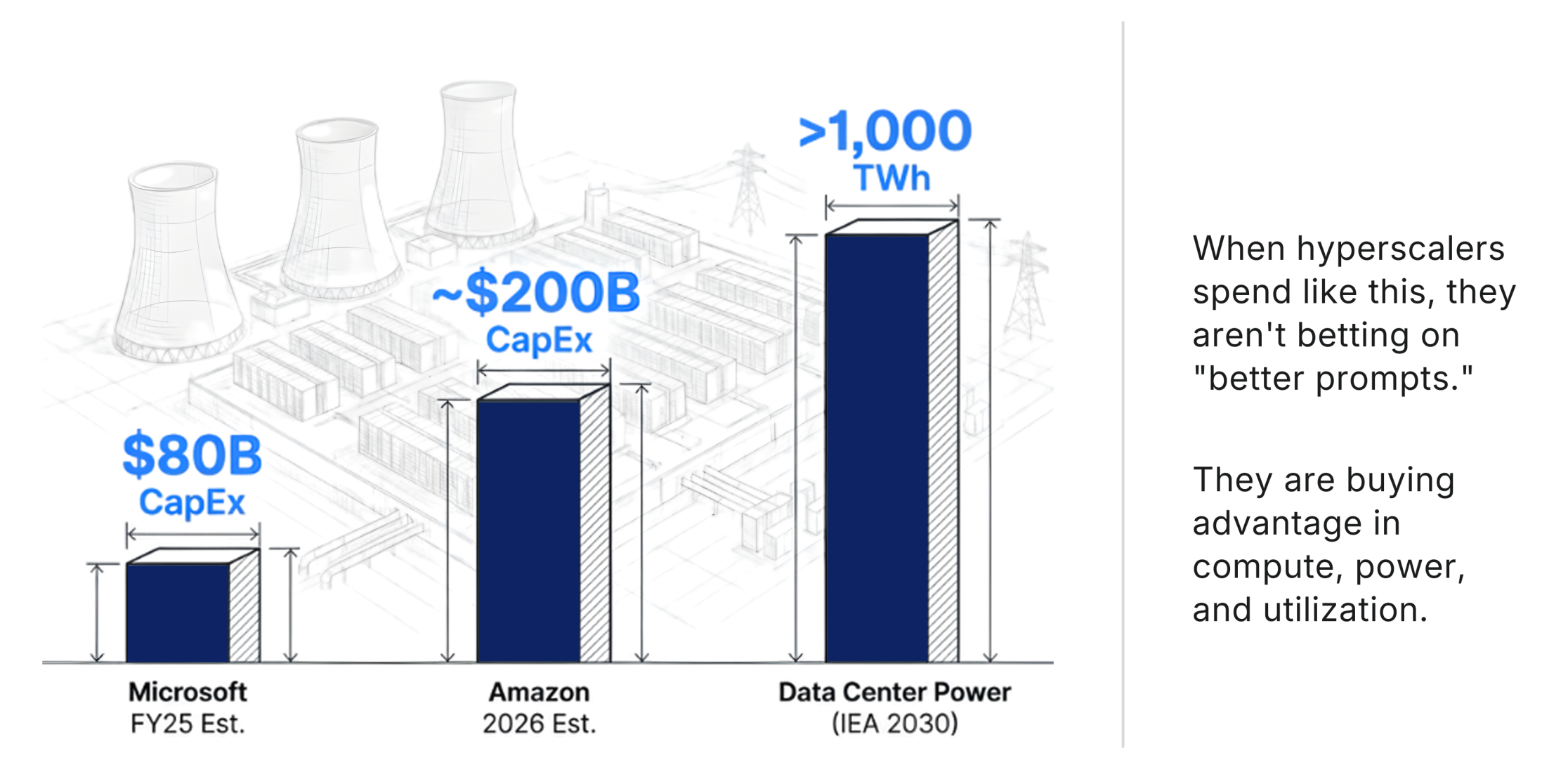

This isn’t theoretical. Look at where the serious money is going. Microsoft has publicly said it was on track to invest roughly $80B in FY2025 to build AI-enabled datacenters for training and deployment.

Amazon’s CEO has been explicit about massive capex ramping toward AI and AWS capacity; reporting points to plans around $200B for 2026 capex largely tied to AWS and AI infrastructure.

And the International Energy Agency is forecasting a steep rise in the electricity supply needed for data centres, from about 460 TWh (2024) to over 1,000 TWh by 2030 in its base case. Power is becoming a binding constraint.

When hyperscalers spend like this, they’re not betting on “better prompts.” They’re buying advantage in compute, power, and utilization.

Which is exactly why the next AI moat is less about model IQ and more about cost per unit of inference.

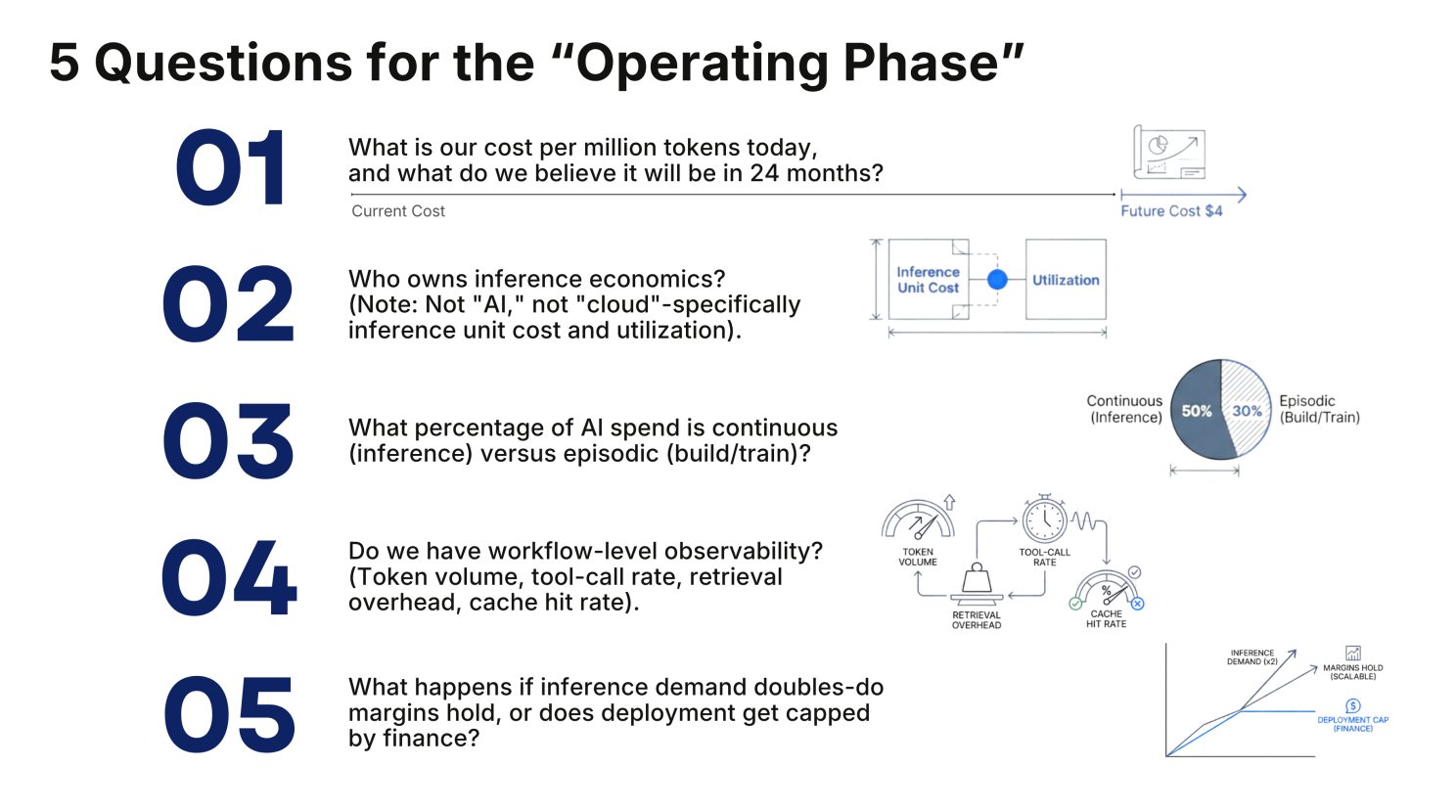

If you’re scaling enterprise AI, these are the questions that matter more than benchmark deltas:

If the answer to ownership is “no one,” that’s not a gap. That’s a structural vulnerability.

Model capability gaps will narrow. Infrastructure discipline gaps will widen.

The first wave proved intelligence works. The next wave will decide whether intelligence can operate at scale, predictably, sustainably, and without power/cost volatility.

That’s where the real AI advantage is moving.

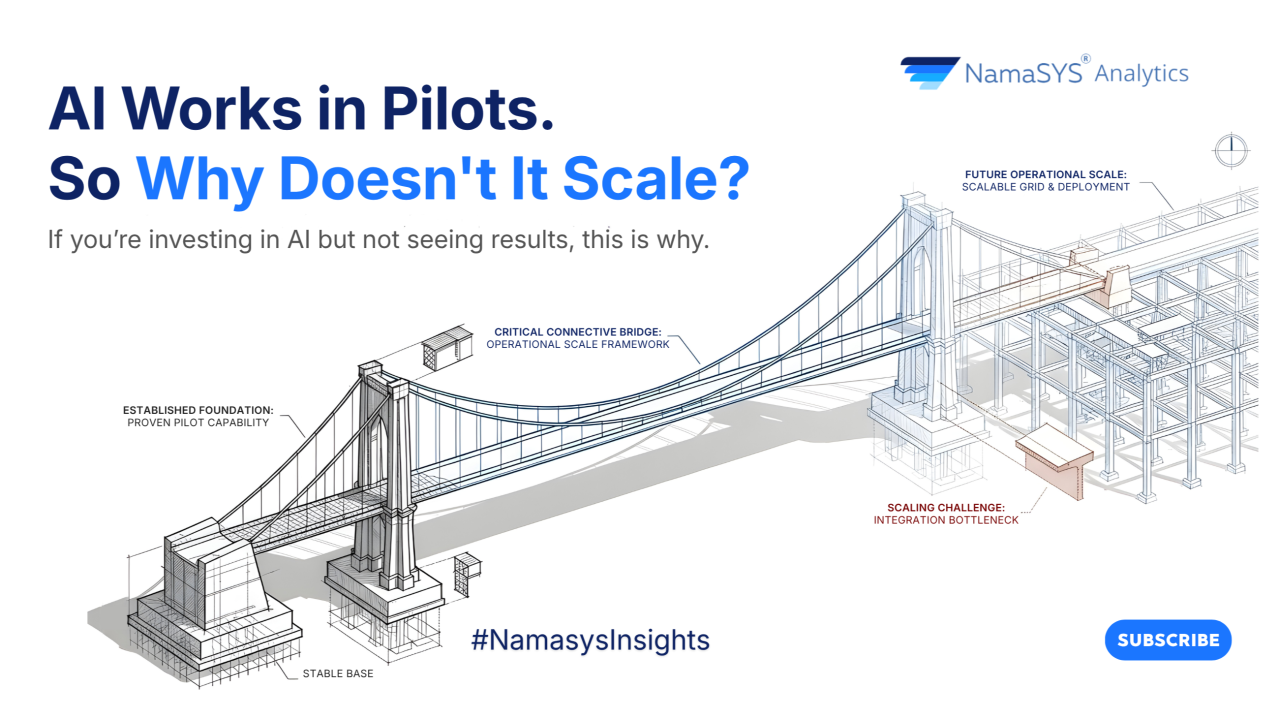

If you’re building beyond pilots, you’ll run into token economics sooner than you think. Better to design for it now.
