Apr 28, 2026

Most AI hiring tools speed up workflows but don’t improve decisions. This article explores why hiring is fundamentally a decision problem, and how KYNAA brings structure, consistency, and intelligence to it.

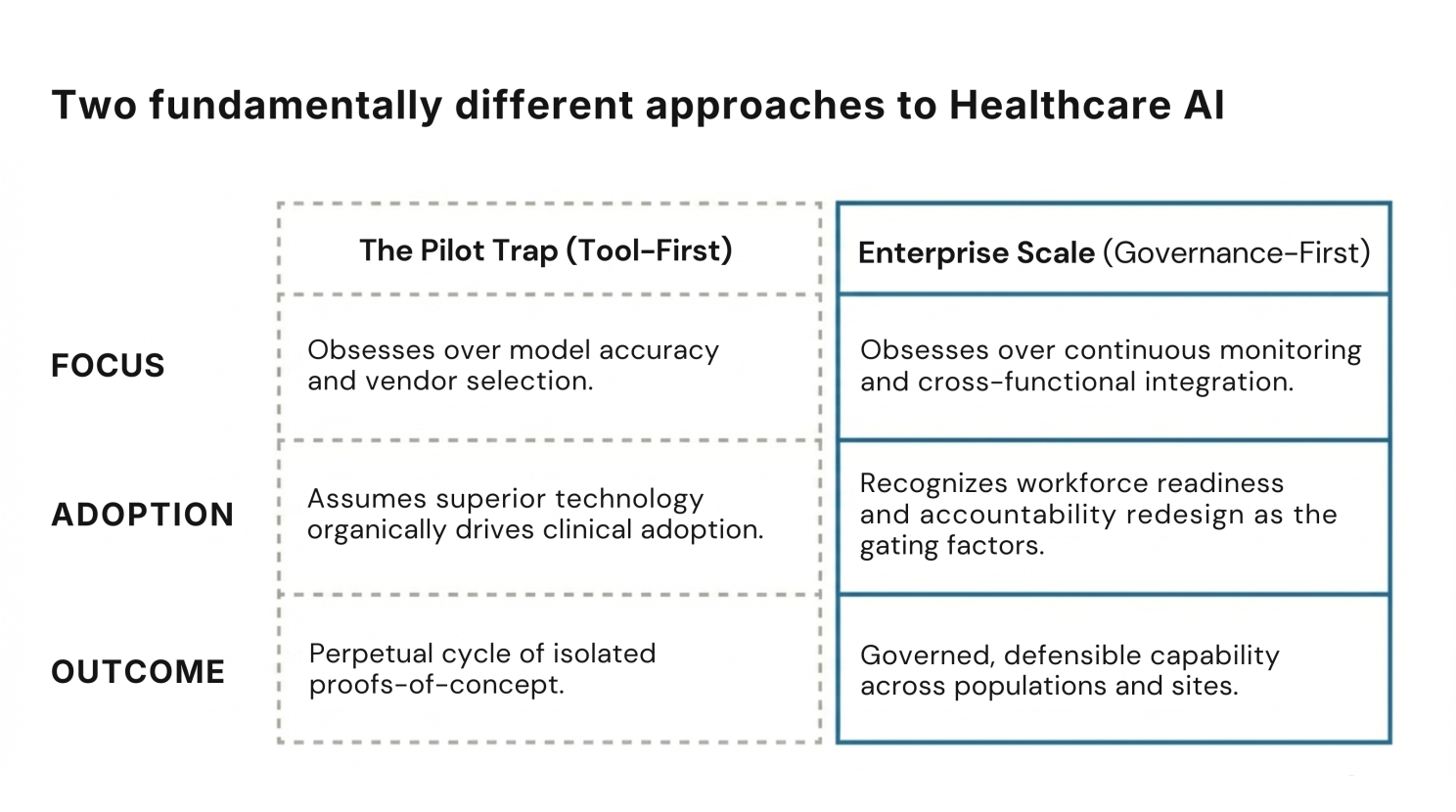

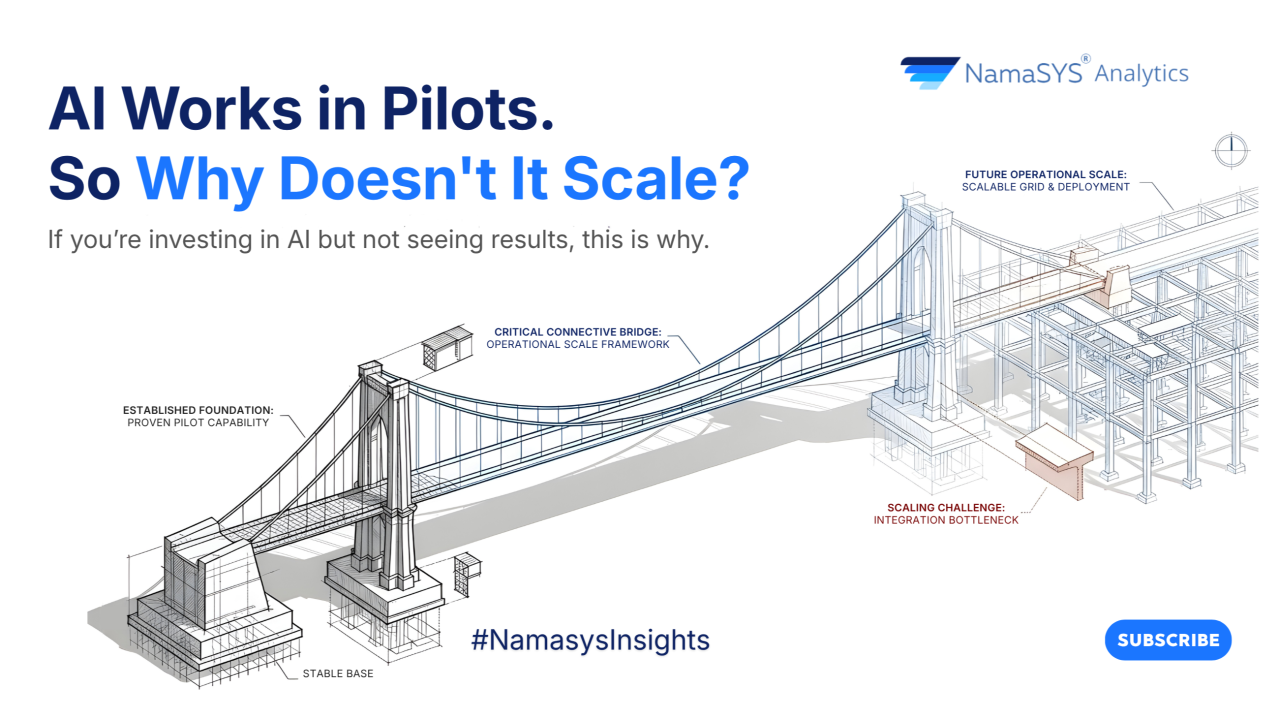

Most healthcare AI initiatives don't fail. They stall. And the difference between the organizations stuck in that stall and the ones achieving genuine enterprise deployment has nothing to do with the quality of their models. It has everything to do with how they're built to run them.

According to a survey of US healthcare leaders, 85% are already exploring or adopting generative AI. But a 2026 global health-system outlook reveals the other side of that number: just 30% are running AI at scale in even select areas, and only 2% have deployed it across the full enterprise.

This is not a technology problem. It is a governance and organizational capability problem. And understanding that distinction is what separates healthcare leaders who will capture AI's value from those who will spend the next three years in pilot purgatory.

AI's clearest ROI in healthcare is clustering in three zones: administrative efficiency, clinical documentation, and select bounded clinical decision support. The numbers, where they exist, are credible.

Ambient scribes, AI that transcribes and drafts clinical notes from patient encounters, are showing genuine clinician impact. A Kaiser Permanente deployment covering 7,000+ physicians found ambient AI scribes saved the equivalent of ~1,800 workdays, with 84% reporting improved patient communication.

A separate multicenter study of ambient AI, published in JAMA Network Open, showed clinician burnout dropping from 51.9% to 38.8% within 30 days.

On the operational side, industry research indicates that AI can drive significant efficiency gains in payer workflows such as claims management and prior authorization. Health systems are also beginning to project meaningful cost savings from AI-led administrative automation.

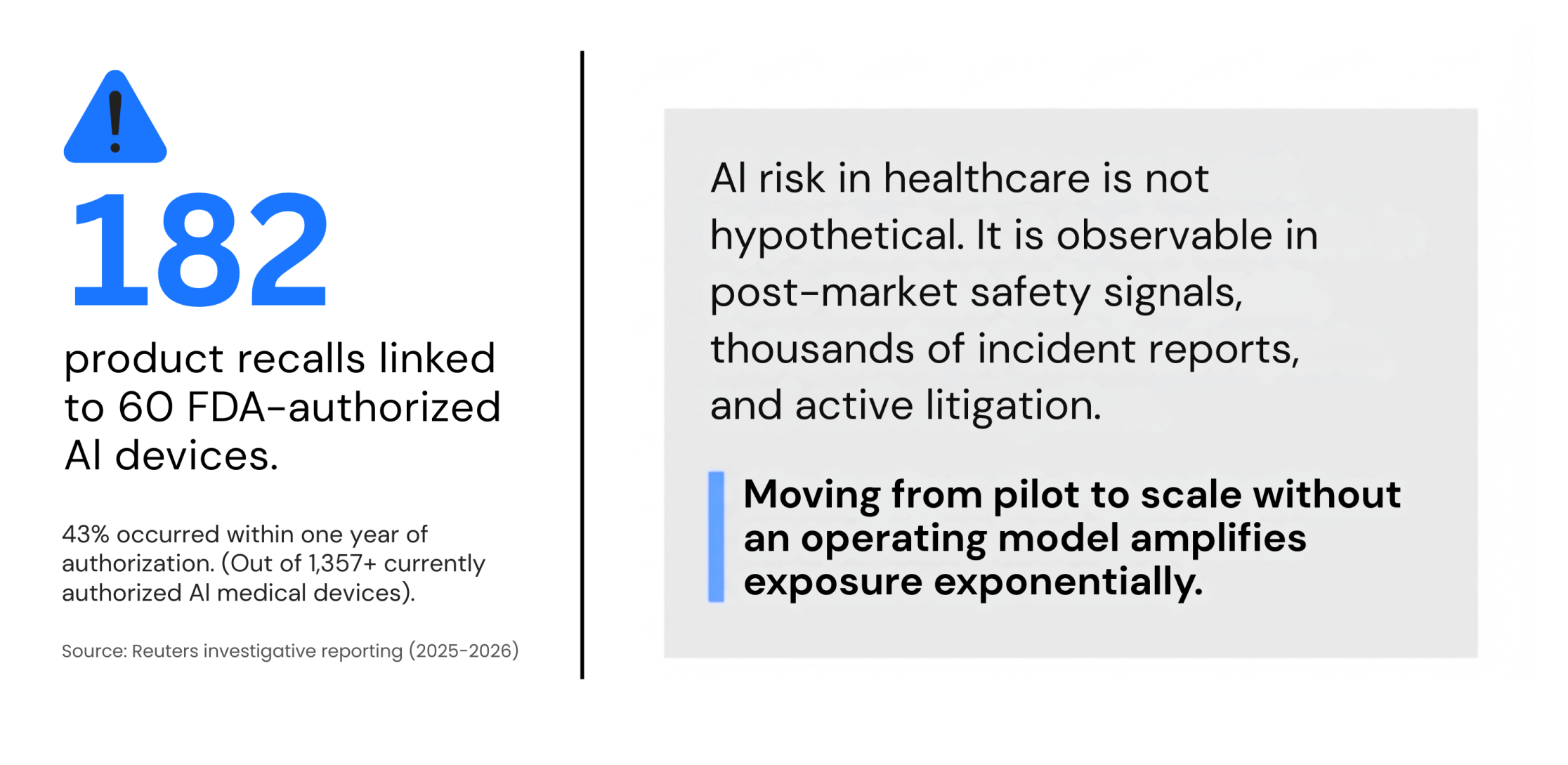

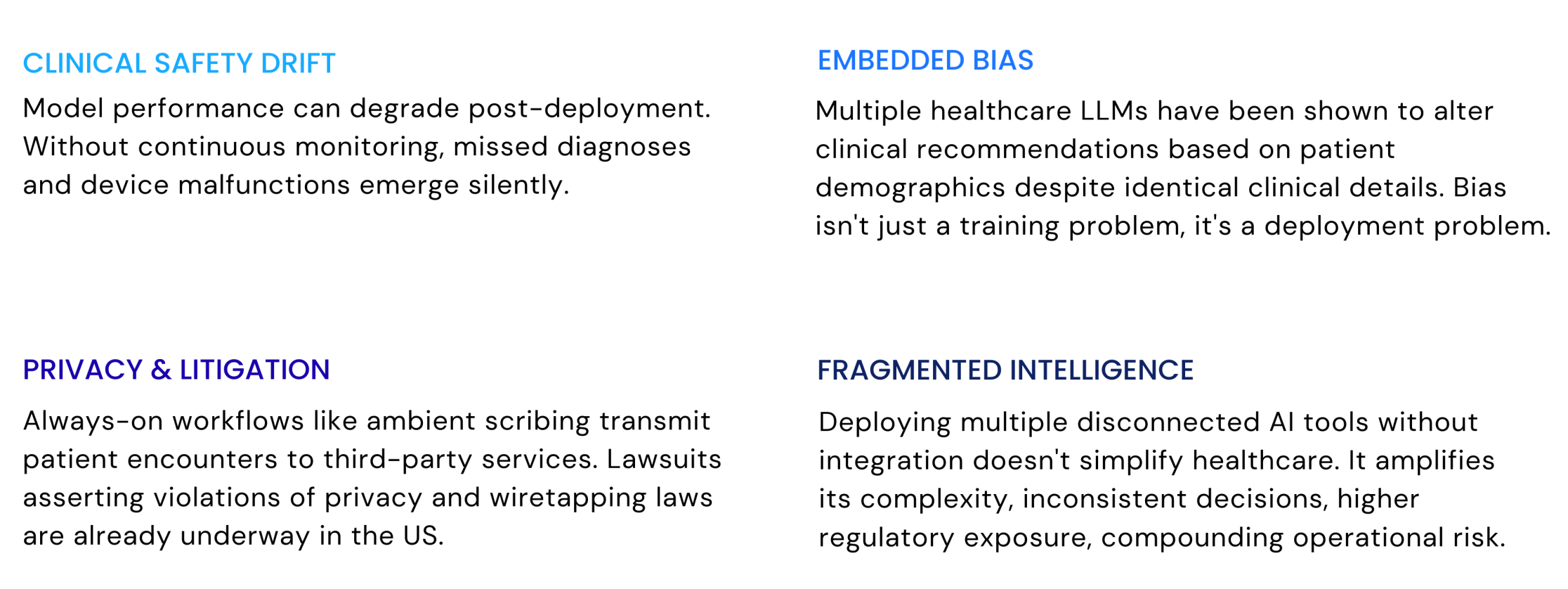

AI risk in healthcare is not hypothetical. It is already observable in post-market signals, documented bias behaviors, and active litigation.

The WHO's six principles (autonomy, safety, transparency, accountability, equity, and sustainability) and the FDA's total product lifecycle framework are not compliance checklists. They are the architecture of a defensible AI program.

NHS England's real-world evaluation model requires multi-site deployment across at least two years before scale. The regulatory direction of travel is clear: govern first, scale second.

The scaling gap is not about model quality. It is about whether an organization can run the full AI lifecycle (risk grading, clinical evaluation, workflow integration, monitoring, and continuous improvement) consistently across sites and populations.

Workforce readiness is the gating factor most boards are underestimating. Fewer than half of clinicians feel comfortable using AI tools today, and 42% worry that AI will replace aspects of their job. That trust deficit cannot be outrun by better technology. It has to be earned through governance, transparency, and proof that the humans in the loop still matter.

At Namasys Analytics, we advise healthcare and life sciences organizations on exactly this transition, from proof-of-concept to governed, enterprise-wide AI capability.

The question a healthcare leadership team should be asking is not "which AI should we adopt?" It is "are we built to run AI responsibly, at scale?" Those are different questions. They lead to fundamentally different outcomes.

The 2% who have achieved enterprise-scale deployment didn't move faster. They asked the right question first.

The future of healthcare will not be defined by who adopted AI first. It will be defined by who built the operating model to run it, responsibly, at scale, with outcomes their board can defend.

Sources: McKinsey & Company - Generative AI in Healthcare Survey (2024); Deloitte - Global Health Care Outlook 2026; JAMA Network Open - Multicenter study on ambient AI and clinician burnout (2025); Kaiser Permanente / The Permanente Medical Group - Ambient AI deployment analysis (reported via Advisory Board, 2026); Reuters - Investigative reporting on AI-enabled medical devices and recalls (2025–2026); World Health Organization - Ethics and Governance of AI for Health; U.S. Food and Drug Administration - AI/ML-Based Software as a Medical Device (SaMD) Framework; NHS England - AI Evaluation Framework for Health and Care

